How to use maple-research-lab/LLaDOU-v0-Code with Transformers:

```python
# Load model directly
from transformers import LLaDOUModelLM
model = LLaDOUModelLM.from_pretrained("maple-research-lab/LLaDOU-v0-Code", trust_remote_code=True, dtype="auto")
```
Metadata

- base_model: GSAI-ML/LLaDA-8B-Instruct

- language: en

- library_name: transformers

- datasets: KodCode/KodCode-V1-SFT-R1
Large Language Diffusion with Ordered Unmasking (LLaDOU)

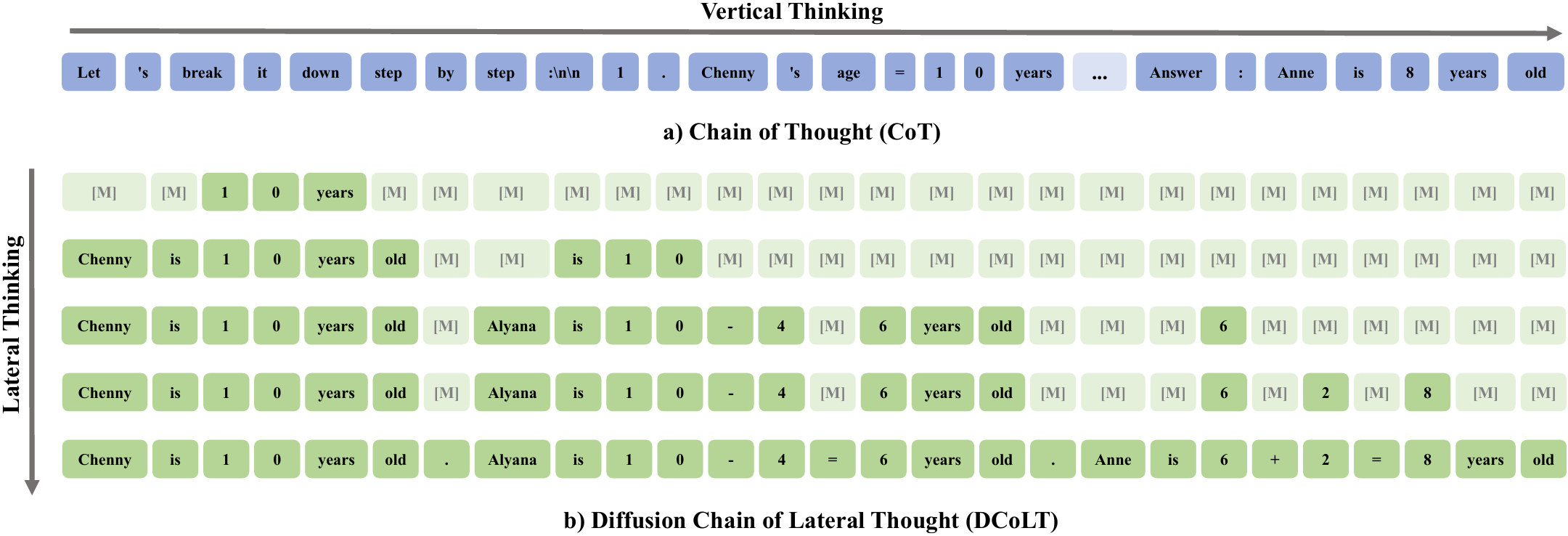

We introduce Large Language Diffusion with Ordered Unmasking (LLaDOU), a diffusion language model trained by reinforcing a new reasoning paradigm, the Diffusion Chain of Lateral Thought (DCoLT).

Compared to standard Chain of Thought (CoT), DCoLT is distinguished by several notable features:

- Bidirectional Reasoning: Allows global refinement throughout generation via bidirectional self-attention masks.

- Format-Free Reasoning: Imposes no strict rule on grammatical correctness in the intermediate steps of thought.

- Nonlinear Generation: Generating tokens at various positions in different steps.
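To make the idea of nonlinear generation concrete, here is a minimal toy sketch in plain Python. It is not the model's actual procedure: the function `toy_unmask`, the `<mask>` placeholder string, and the random position scores are all hypothetical stand-ins chosen for illustration. A real diffusion language model would predict both the tokens and the positions to reveal, whereas this sketch copies tokens from a known target and picks positions at random.

```python
import random

MASK = "<mask>"  # hypothetical placeholder for a not-yet-generated token


def toy_unmask(target, steps, seed=0):
    """Reveal the tokens of `target` over at most `steps` rounds.

    Positions are revealed in a non-left-to-right order chosen by a
    stand-in scorer (random numbers). Returns the list of intermediate
    sequences, starting from the fully masked one.
    """
    rng = random.Random(seed)
    seq = [MASK] * len(target)
    history = [list(seq)]
    for step in range(steps):
        masked = [i for i, tok in enumerate(seq) if tok == MASK]
        if not masked:
            break
        # Spread the remaining positions evenly over the remaining steps.
        remaining_steps = steps - step
        k = -(-len(masked) // remaining_steps)  # ceiling division
        # Hypothetical scores: higher means "unmask this position now".
        scores = {i: rng.random() for i in masked}
        chosen = sorted(masked, key=scores.get, reverse=True)[:k]
        for i in chosen:
            seq[i] = target[i]  # a real model would predict this token
        history.append(list(seq))
    return history


if __name__ == "__main__":
    tokens = "def add ( a , b ) : return a + b".split()
    for state in toy_unmask(tokens, steps=4):
        print(" ".join(state))
```

Each printed line is one intermediate state. Tokens appear at scattered positions rather than strictly left to right, which is the property the "Nonlinear Generation" bullet describes.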

Instructions

LLaDOU-v0-Code is a code-specific model trained on a subset of KodCode-V1-SFT-R1.

For inference code and detailed instructions, please refer to our GitHub page: maple-research-lab/LLaDOU.