Omni2Sound — Unified Video-Text-to-Audio Generation

CVPR 2026 (Highlight)

Model Description

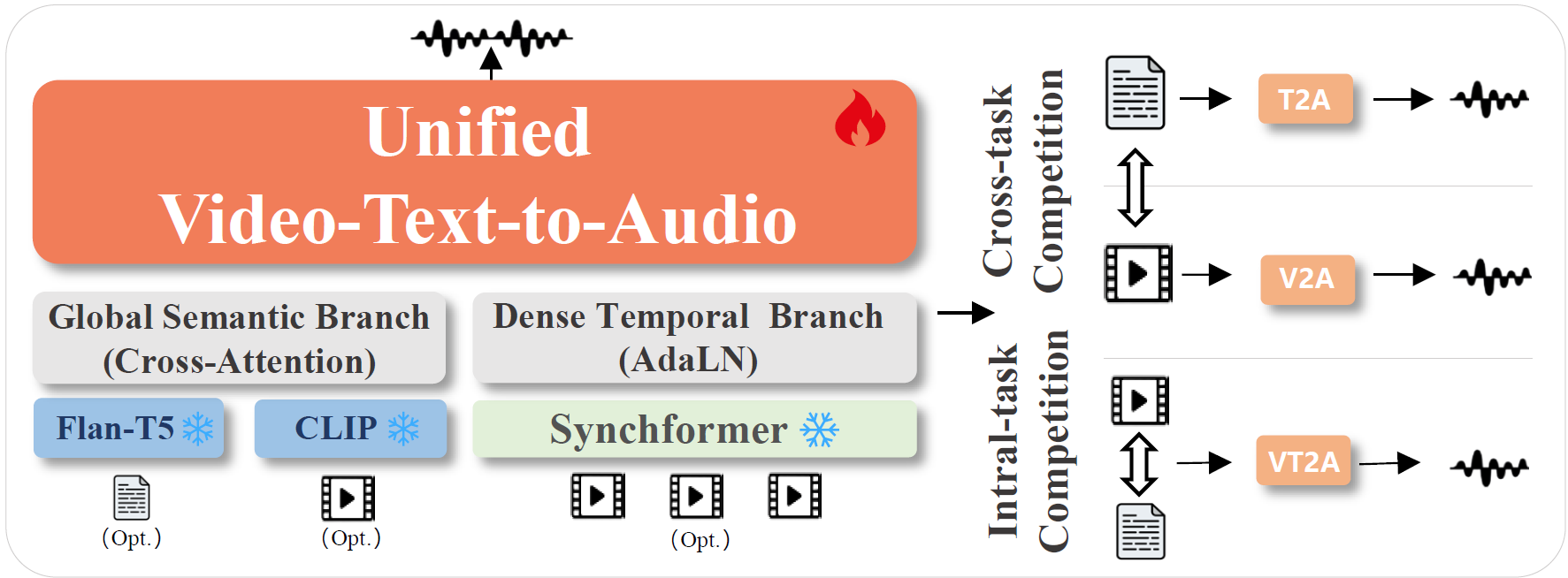

Omni2Sound is a unified framework for generating temporally aligned and semantically faithful audio from video, text, or both. A single model handles three tasks:

- VT2A (Video + Text → Audio)

- V2A (Video → Audio)

- T2A (Text → Audio)

Omni2Sound achieves state-of-the-art performance across all three tasks on the VGGSound-Omni benchmark, surpassing both previous unified models (AudioX, MMAudio) and specialized models (ThinkSound, HunyuanVideo-Foley).

Architecture

Omni2Sound is built on a standard Diffusion Transformer (DiT) backbone with a decoupled two-branch conditioning design:

- Semantic Branch ("What"): Fuses text embeddings from Flan-T5 and visual features from CLIP via cross-attention, providing high-level semantic context. For unimodal tasks (V2A or T2A), the absent modality is simply omitted — no padding needed.

- Temporal Branch ("When"): Uses a Synchformer to extract fine-grained visual-temporal features, injected globally via Adaptive Layer Normalization (AdaLN) for precise audio-visual synchronization.
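
The two branches can be illustrated with a toy, framework-free sketch. It is not the released implementation. The function names (`task_name`, `semantic_context`, `adaln`) are hypothetical, concatenating the text and visual tokens into a single cross-attention context is an assumption about how the fusion might be done, and the `(1 + scale)` modulation form is the convention commonly used in DiT-style models rather than something stated here. The first two functions show how an absent modality can simply be left out of the context; the last shows AdaLN modulating one hidden vector with a scale/shift pair that, in a real model, would be predicted from the Synchformer features.

```python
import math
from typing import List, Optional, Sequence

Vector = List[float]


def task_name(text_tokens: Optional[Sequence[Vector]],
              video_tokens: Optional[Sequence[Vector]]) -> str:
    """Name the task implied by which conditions are present."""
    if text_tokens and video_tokens:
        return "VT2A"
    if video_tokens:
        return "V2A"
    if text_tokens:
        return "T2A"
    raise ValueError("at least one of text or video must be provided")


def semantic_context(text_tokens: Optional[Sequence[Vector]],
                     video_tokens: Optional[Sequence[Vector]]) -> List[Vector]:
    """Build the cross-attention context (assumed: plain concatenation).

    An absent modality contributes no tokens at all; nothing is padded.
    """
    context: List[Vector] = []
    for tokens in (text_tokens, video_tokens):
        if tokens:
            context.extend(tokens)
    return context


def layer_norm(x: Vector, eps: float = 1e-5) -> Vector:
    """Normalize one vector to zero mean and unit variance (no learned affine)."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]


def adaln(x: Vector, scale: Vector, shift: Vector) -> Vector:
    """AdaLN: normalize x, then apply a condition-dependent scale and shift."""
    normed = layer_norm(x)
    return [n * (1.0 + s) + b for n, s, b in zip(normed, scale, shift)]


# Toy token sequences standing in for Flan-T5 and CLIP features.
text = [[0.1, 0.2], [0.3, 0.4]]
video = [[0.5, 0.6]]
print(task_name(text, video), len(semantic_context(text, video)))  # VT2A 3
print(task_name(None, video), len(semantic_context(None, video)))  # V2A 1
```

Because the context is just a variable-length token list, the same attention layer can serve all three tasks without a separate code path per task.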

The model is trained with a three-stage progressive multi-task training schedule:

- Stage 1 — Large-scale T2A pretraining on text-audio pairs

- Stage 2 — Multi-task interleaved finetuning (joint VT2A + V2A + T2A) on SoundAtlas

- Stage 3 — Decoupled robustness finetuning with off-screen synthesis and text dropout augmentations
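
A minimal sketch of how the Stage 2 task interleaving and the Stage 3 text dropout might look inside a data loader. The sampling weights, the dropout probability, and the names `STAGES`, `sample_task`, and `maybe_drop_text` are all hypothetical and are not taken from the paper or the repository. Dropping the whole caption is only one simple way to simulate incomplete text, and the off-screen synthesis augmentation is not modeled here.

```python
import random
from typing import Dict, Optional

# Hypothetical schedule: the stage names and all numbers are placeholders.
STAGES = [
    {"name": "stage1_t2a_pretrain", "tasks": {"T2A": 1.0}},
    {"name": "stage2_multitask", "tasks": {"VT2A": 0.4, "V2A": 0.3, "T2A": 0.3}},
    {"name": "stage3_robustness", "tasks": {"VT2A": 0.4, "V2A": 0.3, "T2A": 0.3},
     "text_dropout": 0.15},
]


def sample_task(rng: random.Random, task_weights: Dict[str, float]) -> str:
    """Pick the task for the next training sample according to the stage weights."""
    names = list(task_weights)
    weights = [task_weights[name] for name in names]
    return rng.choices(names, weights=weights, k=1)[0]


def maybe_drop_text(rng: random.Random, text: str, p: float) -> Optional[str]:
    """Return None with probability p, otherwise the caption unchanged."""
    return None if rng.random() < p else text


rng = random.Random(0)
stage = STAGES[2]
task = sample_task(rng, stage["tasks"])
text = None if task == "V2A" else "a dog barks twice"
# In this sketch, dropout is applied only to VT2A samples so that a
# condition (the video) always remains after the text is removed.
if task == "VT2A":
    text = maybe_drop_text(rng, text, stage["text_dropout"])
print(stage["name"], task, text)
```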

Key Features

- Unified SOTA: A single model achieves state-of-the-art results on VT2A, V2A, and T2A simultaneously

- Strong temporal control: Fine-grained audio-visual synchronization via Synchformer temporal features

- Strong semantic control: Faithful audio generation guided by text and/or visual semantics

- Robustness: Handles challenging scenarios including off-screen audio synthesis and incomplete text inputs

- Simple design: Plain DiT backbone; the gains come from high-quality data (SoundAtlas) and the training strategy

Model Files

omni2sound/
├── oob_vae_16k_224410.ckpt          # Audio VAE
├── synchformer_state_dict.pth       # Synchformer temporal encoder
└── vt2a-24-v55vt35-oa15-mq-td15/
    ├── args.yaml
    ├── data_config.yaml
    ├── model_config.json
    └── checkpoints/model.ckpt       # DiT backbone weights

Additionally, download the following dependencies into weights/:

| Model | Source |
|---|---|
| DFN5B-CLIP-ViT-H-14-384 | apple/DFN5B-CLIP-ViT-H-14-384 |
| flan-t5-base | google/flan-t5-base |
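
The downloads can also be scripted. The sketch below only builds `huggingface-cli download` commands, in the same form as the Quick Start command, for the model repo and the two dependencies. The per-dependency folder names under weights/ and the helper names `download_commands` and `run_all` are assumptions, so check the GitHub repo for the layout the inference script actually expects.

```python
import subprocess
from pathlib import PurePosixPath
from typing import List

# Hugging Face repo id -> folder under weights/.
# Only "omni2sound" comes from the Quick Start; the other two folder
# names are assumed for illustration.
REPOS = {
    "Dalision/Omni2Sound": "omni2sound",
    "apple/DFN5B-CLIP-ViT-H-14-384": "DFN5B-CLIP-ViT-H-14-384",
    "google/flan-t5-base": "flan-t5-base",
}


def download_commands(weights_dir: str = "weights") -> List[List[str]]:
    """Return one huggingface-cli argv list per repository."""
    root = PurePosixPath(weights_dir)
    return [
        ["huggingface-cli", "download", repo, "--local-dir", str(root / folder)]
        for repo, folder in REPOS.items()
    ]


def run_all(weights_dir: str = "weights", dry_run: bool = True) -> None:
    """Print each command, and execute it only when dry_run is False."""
    for cmd in download_commands(weights_dir):
        print(" ".join(cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)


run_all()  # dry run: prints the three commands without downloading anything
```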

Quick Start

git clone https://github.com/omni2sound/Omni2Sound.git

cd Omni2Sound

pip install torch==2.1.0 torchaudio==2.1.0 torchvision==0.16.0 \
    --index-url https://download.pytorch.org/whl/cu121

pip install -r requirements.txt

huggingface-cli download Dalision/Omni2Sound --local-dir weights/omni2sound

# Run inference

bash scripts/infer_online.sh

See the GitHub repo for full instructions on inference and finetuning.
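
Before running inference, it can help to confirm that the checkpoints landed where expected. This sketch checks for the files listed under Model Files, assuming they sit under weights/omni2sound as in the download command above. `missing_files` and `EXPECTED` are hypothetical helpers, not part of the repository.

```python
from pathlib import Path
from typing import List

RUN_DIR = "vt2a-24-v55vt35-oa15-mq-td15"

# Relative paths taken from the Model Files listing.
EXPECTED = [
    "oob_vae_16k_224410.ckpt",
    "synchformer_state_dict.pth",
    f"{RUN_DIR}/args.yaml",
    f"{RUN_DIR}/data_config.yaml",
    f"{RUN_DIR}/model_config.json",
    f"{RUN_DIR}/checkpoints/model.ckpt",
]


def missing_files(model_dir: str = "weights/omni2sound") -> List[str]:
    """Return the expected files that are not present under model_dir."""
    root = Path(model_dir)
    return [rel for rel in EXPECTED if not (root / rel).is_file()]


if __name__ == "__main__":
    missing = missing_files()
    if missing:
        print("Missing files:")
        for rel in missing:
            print("  " + rel)
    else:
        print("All expected files found.")
```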

Links

- Paper: arXiv:2601.02731

- Project Page: omni2sound.github.io/

- Code: github.com/omni2sound/Omni2Sound

- Benchmark & Dataset: Dalision/Omni2Sound_Benchmark

- Evaluation Results: Dalision/Omni2Sound_Result

Citation

@article{dai2026omni2sound,
  title   = {Omni2Sound: Towards Unified Video-Text-to-Audio Generation},
  author  = {Dai, Yusheng and Chen, Zehua and Jiang, Yuxuan and Gao, Baolong and
             Ke, Qiuhong and Cai, Jianfei and Zhu, Jun},
  journal = {arXiv preprint arXiv:2601.02731},
  year    = {2026}
}

License

Both the code and model weights are released under CC BY-NC 4.0 (non-commercial use only).
